As AI evolves rapidly, the emergence of GenAI technologies such as GPT models has sparked a novel and critical role: prompt engineering. This specialised function is becoming indispensable in optimising the interaction between humans and AI, serving as a bridge that translates human intentions into prompts that guide AI to produce desired outcomes. In this Ecosystm Insight, I will explore prompt engineering: why it matters, what the role involves, and how it helps organisations harness AI’s full potential.

Understanding Prompt Engineering

Prompt engineering is an interdisciplinary role that combines elements of linguistics, psychology, computer science, and creative writing. It involves crafting inputs (prompts) that are specifically designed to elicit the most accurate, relevant, and contextually appropriate responses from AI models. This process requires a nuanced understanding of how different models process information, as well as creativity and strategic thinking to manipulate these inputs for optimal results.

As GenAI applications become more integrated across sectors – ranging from creative industries to technical fields – the ability to effectively communicate with AI systems has become a cornerstone of leveraging AI capabilities. Prompt engineers play a crucial role in this scenario, refining the way we interact with AI to enhance productivity, foster innovation, and create solutions that were previously unimaginable.

The Art and Science of Crafting Prompts

Prompt engineering is as much an art as it is a science. It demands a balance between technical understanding of AI models and the creative flair to engage these models in producing novel content. A well-crafted prompt can be the difference between an AI generating generic, irrelevant content and producing work that is insightful, innovative, and tailored to specific needs.

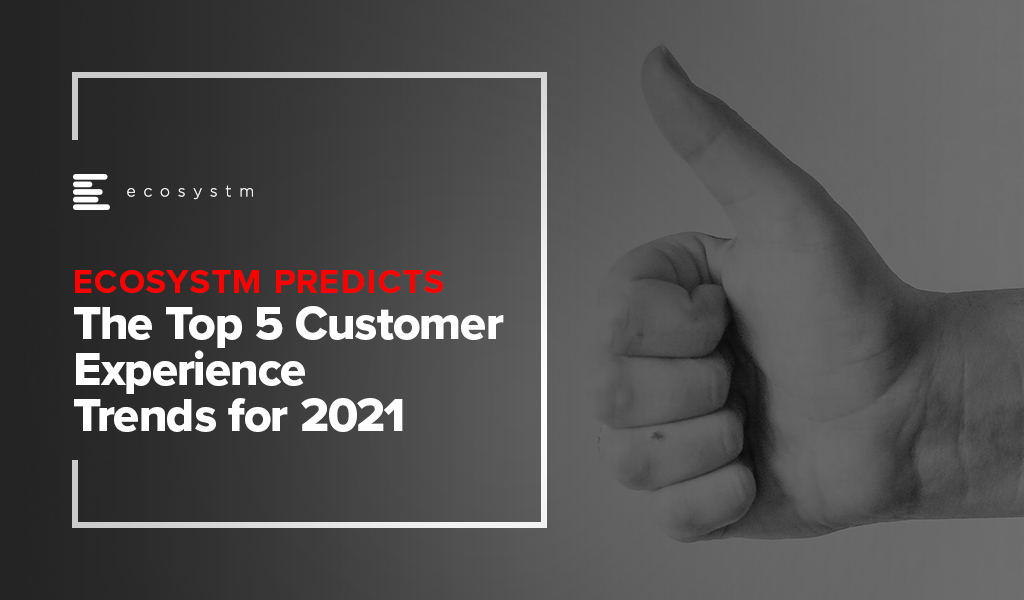

Key responsibilities in prompt engineering include:

- Prompt Optimisation. Fine-tuning prompts to achieve the highest quality output from AI models. This involves understanding the intricacies of model behaviour and leveraging this knowledge to guide the AI towards desired responses.

- Performance Testing and Iteration. Continuously evaluating the effectiveness of different prompts through systematic testing, analysing outcomes, and refining strategies based on empirical data.

- Cross-Functional Collaboration. Engaging with a diverse team of professionals, including data scientists, AI researchers, and domain experts, to ensure that prompts are aligned with project goals and leverage domain-specific knowledge effectively.

- Documentation and Knowledge Sharing. Developing comprehensive guidelines, best practices, and training materials to standardise prompt engineering methodologies within an organisation, facilitating knowledge transfer and consistency in AI interactions.
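The testing-and-iteration responsibility above can be sketched as a small evaluation loop. This is a minimal illustration, not a production harness: `fake_model` is a hypothetical stand-in for a real model call, and the scoring rule (the share of required terms found in the output) is an assumed metric chosen only for simplicity.

```python
def fake_model(prompt):
    """Hypothetical stand-in for a real model call; returns canned text."""
    if "bullet" in prompt.lower():
        return "- Revenue grew 12%\n- Costs fell 3%"
    return "Revenue grew and costs fell over the period."


def score(output, required_terms):
    """Assumed metric: fraction of required terms that appear in the output."""
    text = output.lower()
    hits = sum(1 for term in required_terms if term.lower() in text)
    return hits / len(required_terms)


def rank_prompts(prompts, model, required_terms):
    """Run every prompt through the model and sort by score, best first."""
    results = [(score(model(p), required_terms), p) for p in prompts]
    return sorted(results, key=lambda r: r[0], reverse=True)


prompts = [
    "Summarise the quarterly results.",
    "Summarise the quarterly results as bullet points with exact percentages.",
]
ranking = rank_prompts(prompts, fake_model, ["12%", "3%"])
for value, prompt in ranking:
    print(f"{value:.2f}  {prompt}")
```

In practice the stub would be replaced by a call to the model being tested, and the metric by whatever quality measure the team has agreed on.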

The Strategic Importance of Prompt Engineering

Effective prompt engineering can significantly enhance the efficiency and outcomes of AI projects. By reducing the need for extensive trial and error, prompt engineers help streamline the development process, saving time and resources. Moreover, their work is vital in mitigating biases and errors in AI-generated content, contributing to the development of responsible and ethical AI solutions.

As AI technologies continue to advance, the role of the prompt engineer will evolve, incorporating new insights from research and practice. The ability to dynamically interact with AI, guiding its creative and analytical processes through precisely engineered prompts, will be a key differentiator in the success of AI applications across industries.

Want to Hire a Prompt Engineer?

Here is a sample job description for a prompt engineer if you think that your organisation will benefit from the role.

Conclusion

Prompt engineering represents a crucial evolution in the field of AI, addressing the gap between human intention and machine-generated output. As we continue to explore the boundaries of what AI can achieve, the demand for skilled prompt engineers – who can navigate the complex interplay between technology and human language – will grow. Their work not only enhances the practical applications of AI but also pushes the frontier of human-machine collaboration, making them indispensable in the modern AI ecosystem.

AI has become a business necessity today, catalysing innovation, efficiency, and growth by transforming extensive data into actionable insights, automating tasks, improving decision-making, boosting productivity, and enabling the creation of new products and services.

Generative AI stole the limelight in 2023 given its remarkable advancements and potential to automate various cognitive processes. However, now the real opportunity lies in leveraging this increased focus and attention to shine the AI lens on all business processes and capabilities. As organisations grasp the potential for productivity enhancements, accelerated operations, improved customer outcomes, and enhanced business performance, investment in AI capabilities is expected to surge.

In this eBook, Ecosystm VP Research Tim Sheedy and Vinod Bijlani and Aman Deep from HPE APAC share their insights on why it is crucial to establish tailored AI capabilities within the organisation.

In my last Ecosystm Insights, I spoke about the implications of the shift from Predictive AI to Generative AI on ROI considerations of AI deployments. However, from my discussions with colleagues and technology leaders it became clear that there is a need to define and distinguish between Predictive AI and Generative AI better.

Predictive AI analyses historical data to predict future outcomes, crucial for informed decision-making and strategic planning. Generative AI unlocks new avenues for innovation by creating novel data and content. Organisations need both – Predictive AI for enhancing operational efficiencies and forecasting capabilities and Generative AI to drive innovation; create new products, services, and experiences; and solve complex problems in unprecedented ways.

This guide aims to demystify these categories, providing clarity on their differences, applications, and examples of the algorithms they use.

Predictive AI: Forecasting the Future

Predictive AI is extensively used in fields such as finance, marketing, healthcare and more. The core idea is to identify patterns or trends in data that can inform future decisions. Predictive AI relies on statistical, machine learning, and deep learning models to forecast outcomes.

Key Algorithms in Predictive AI

- Regression Analysis. Linear and logistic regression are foundational tools: linear regression predicts a continuous outcome and logistic regression a categorical one, each based on one or more predictor variables.

- Decision Trees. These models use a tree-like graph of decisions and their possible consequences, including chance event outcomes, resource costs and utility.

- Random Forest (RF). An ensemble learning method that operates by constructing a multitude of decision trees at training time to improve predictive accuracy and control over-fitting.

- Gradient Boosting Machines (GBM). Another ensemble technique that builds models sequentially, each new model correcting errors made by the previous ones, used for both regression and classification tasks.

- Support Vector Machines (SVM). A supervised machine learning model that finds the boundary separating two classes with the widest possible margin; it is best known for two-group classification problems.
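To make the idea of forecasting from historical data concrete, here is a minimal sketch of the simplest algorithm in the list, linear regression fitted by ordinary least squares. The monthly demand figures are made up for illustration, and the function name `fit_line` is an assumption of this sketch rather than part of any library.

```python
def fit_line(xs, ys):
    """Ordinary least squares for one predictor: returns (slope, intercept)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Covariance of x and y, and variance of x (both unnormalised).
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Made-up historical data: demand in units for months 1 to 5.
months = [1, 2, 3, 4, 5]
demand = [110, 120, 130, 140, 150]

slope, intercept = fit_line(months, demand)
forecast = slope * 6 + intercept  # predicted demand for month 6
print(f"slope={slope}, intercept={intercept}, month 6 forecast={forecast}")
```

Real demand data is rarely this clean, which is where the ensemble methods listed above tend to earn their place.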

Generative AI: Creating New Data

Generative AI, on the other hand, focuses on generating new data that is similar but not identical to the data it has been trained on. This can include anything from images, text, and videos to synthetic data for training other AI models. GenAI is particularly known for its ability to innovate, create, and simulate in ways that predictive AI cannot.

Key Algorithms in Generative AI

- Generative Adversarial Networks (GANs). Comprising two networks – a generator and a discriminator – GANs are trained to generate new data with the same statistics as the training set.

- Variational Autoencoders (VAEs). These are generative algorithms that use neural networks for encoding inputs into a latent space representation, then reconstructing the input data based on this representation.

- Transformer Models. Originally designed for natural language processing (NLP) tasks, transformers can be adapted for generative purposes, as seen in models like GPT (Generative Pre-trained Transformer), which can generate coherent and contextually relevant text based on a given prompt.
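The core idea of generating new data that resembles the training data can be shown with something far simpler than any of the algorithms above. The sketch below is a toy bigram (Markov chain) text generator, not a GAN, VAE, or transformer; it is swapped in only because it fits in a few lines. The corpus and function names are assumptions made for illustration.

```python
import random
from collections import defaultdict


def build_bigram_model(text):
    """Map each word to the list of words that follow it in the text."""
    words = text.split()
    model = defaultdict(list)
    for current, following in zip(words, words[1:]):
        model[current].append(following)
    return model


def generate(model, start, length, seed=0):
    """Walk the bigram table from `start`, sampling one follower per step."""
    rng = random.Random(seed)  # fixed seed so runs are repeatable
    word = start
    output = [word]
    for _ in range(length - 1):
        followers = model.get(word)
        if not followers:  # dead end: the word never had a successor
            break
        word = rng.choice(followers)
        output.append(word)
    return " ".join(output)


corpus = "the model reads the prompt and the model writes the answer"
model = build_bigram_model(corpus)
sample = generate(model, "the", 8)
print(sample)
```

Every word pair in the output was seen in the corpus, yet the sentence as a whole need not appear in it, which is the "similar but not identical" property in miniature.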

Comparing Predictive and Generative AI

The fundamental difference between the two lies in their primary objectives: Predictive AI aims to forecast future outcomes based on past data, while Generative AI aims to create new, original data that mimics the input data in some form.

The differences become clearer when we look at these examples.

Predictive AI Examples

- Supply Chain Management. Analysing historical supply chain data to forecast demand, manage inventory levels, reduce costs, and improve delivery times.

- Healthcare. Analysing patient records to predict disease outbreaks or the likelihood of a disease in individual patients.

- Predictive Maintenance. Analysing historical and real-time data to pre-emptively identify system failures or network issues, enhancing infrastructure reliability and operational efficiency.

- Finance. Using historical stock prices and indicators to predict future market trends.

Generative AI Examples

- Content Creation. Generating realistic images or art from textual descriptions using GANs.

- Text Generation. Creating coherent and contextually relevant articles, stories, or conversational responses using transformer models like GPT-3.

- Chatbots and Virtual Assistants. Advanced GenAI models are enhancing chatbots and virtual assistants, making them more realistic.

- Automated Code Generation. Using natural language descriptions to generate programming code and scripts, significantly speeding up software development processes.

Conclusion

Organisations that exclusively focus on Generative AI may find themselves at the forefront of innovation, by leveraging its ability to create new content, simulate scenarios, and generate original data. However, solely relying on Generative AI without integrating Predictive AI’s capabilities may limit an organisation’s ability to make data-driven decisions and forecasts based on historical data. This could lead to missed opportunities to optimise operations, mitigate risks, and accurately plan for future trends and demands. Predictive AI’s strength lies in analysing past and present data to inform strategic decision-making, crucial for long-term sustainability and operational efficiency.

It is essential for companies to adopt a dual-strategy approach in their AI efforts. Together, these AI paradigms can significantly amplify an organisation’s ability to adapt, innovate, and compete in rapidly changing markets.

Google recently extended its Generative AI, Bard, to include coding in more than 20 programming languages, including C++, Go, Java, JavaScript, and Python. The search giant has been eager to respond to last year’s launch of ChatGPT but as the trusted incumbent, it has naturally been hesitant to move too quickly. The tendency for large language models (LLMs) to produce controversial and erroneous outputs has the potential to tarnish established brands. Google Bard was released in March in the US and the UK as an LLM but lacked the coding ability of OpenAI’s ChatGPT and Microsoft’s Bing Chat.

Bard’s new features include code generation, optimisation, debugging, and explanation. Using natural language processing (NLP), users can explain their requirements to the AI and ask it to generate code that can then be exported to an integrated development environment (IDE) or executed directly in the browser with Google Colab. Similarly, users can request Bard to debug already existing code, explain code snippets, or optimise code to improve performance.

Google continues to refer to Bard as an experiment and highlights that as is the case with generated text, code produced by the AI may not function as expected. Regardless, the new functionality will be useful for both beginner and experienced developers. Those learning to code can use Generative AI to debug and explain their mistakes or write simple programs. More experienced developers can use the tool to perform lower-value work, such as commenting on code, or scaffolding to identify potential problems.

GitHub Copilot X to Face Competition

While the ability for Bard, Bing, and ChatGPT to generate code is one of their most important use cases, developers are now demanding AI directly in their IDEs.

In March, Microsoft made one of its most significant announcements of the year when it demonstrated GitHub Copilot X, which embeds GPT-4 in the development environment. Earlier this year, Microsoft invested $10 billion into OpenAI to add to the $1 billion from 2019, cementing the partnership between the two AI heavyweights. Among other benefits, this agreement makes Azure the exclusive cloud provider to OpenAI and provides Microsoft with the opportunity to enhance its software with AI co-pilots.

Copilot X is currently in technical preview; when it eventually launches, it will integrate into Visual Studio, Microsoft’s IDE. Presented as a sidebar or chat directly in the IDE, Copilot X will be able to generate, explain, and comment on code, debug, write unit tests, and identify vulnerabilities. The “Hey, GitHub” functionality will allow users to chat using voice, suitable for mobile users or more natural interaction on a desktop.

Not to be outdone by its cloud rivals, in April, AWS announced the general availability of what it describes as a real-time AI coding companion. Amazon CodeWhisperer integrates with a range of IDEs, namely Visual Studio Code, IntelliJ IDEA, CLion, GoLand, WebStorm, Rider, PhpStorm, PyCharm, RubyMine, and DataGrip, or natively in AWS Cloud9 and the AWS Lambda console. While the preview worked for Python, Java, JavaScript, TypeScript, and C#, the general release extends support to most languages. Amazon’s key differentiation is that it is available for free to individual users, while GitHub Copilot is currently subscription-based with exceptions only for teachers, students, and maintainers of open-source projects.

The Next Step: Generative AI in Security

The next battleground for Generative AI will be assisting overworked security analysts. Currently, some of the greatest challenges that Security Operations Centres (SOCs) face are being understaffed and overwhelmed with the number of alerts. Security vendors, such as IBM and Securonix, have already deployed automation to reduce alert noise and help analysts prioritise tasks to avoid responding to false threats.

Google recently introduced Sec-PaLM and Microsoft announced Security Copilot, bringing the power of Generative AI to the SOC. These tools will help analysts interact conversationally with their threat management systems and will explain alerts in natural language. How effective these tools will be is yet to be seen, considering that hallucinations in security are far riskier than in an essay written with ChatGPT.

The Future of AI Code Generators

Although GitHub Copilot and Amazon CodeWhisperer had already launched with limited feature sets, it was the release of ChatGPT last year that ushered in a new era in AI code generation. There is now a race between the cloud hyperscalers to win over developers and to provide AI that supports other functions, such as security.

Despite fears that AI will replace humans, AI tools in their current state are more likely to be used to augment developers. Although AI and automated testing reduce the burden on the already stretched workforce, humans will continue to be in demand to ensure code is secure and satisfies requirements. A likely scenario is that with coding becoming simpler, rather than the number of developers shrinking, the volume and quality of code written will increase. AI will generate a new wave of citizen developers able to work on projects that would previously have been impossible to start. This may, in turn, increase demand for developers to build on these proofs-of-concept.

How the Generative AI landscape evolves over the next year will be interesting. In a recent interview, OpenAI’s founder, Sam Altman, explained that the non-profit model it initially pursued is not feasible, necessitating the launch of a capped-profit subsidiary. The company retains its values, however, focusing on advancing AI responsibly and transparently with public consultation. The appearance of Microsoft, Google, and AWS will undoubtedly change the market dynamics and may force OpenAI to at least reconsider its approach once again.

In this Insight, guest author Anirban Mukherjee lists out the key challenges of AI adoption in traditional organisations – and how best to mitigate these challenges. “I am by no means suggesting that traditional companies avoid or delay adopting AI. That would be akin to asking a factory to keep using only steam as power, even as electrification came in during the early 20th century! But organisations need to have a pragmatic strategy around what will undoubtedly be a big, but necessary, transition.”

After years of evangelising digital adoption, I have more of a nuanced stance today – supporting a prudent strategy, especially where the organisation’s internal capabilities/technology maturity is in question. I still see many traditional organisations burning budgets in AI adoption programs with low success rates, simply because of poor choices driven by misplaced expectations. Without going into the obvious reasons for over-exuberance (media-hype, mis-selling, FOMO, irrational valuations – the list goes on), here are a few patterns that can be detected in those organisations that have succeeded in getting value – and gloriously so!

Data-driven decision-making is a cultural change. Most traditional organisations have a point person/role accountable for any important decision, whose “neck is on the line”. For these organisations to change over to trusting AI decisions (with its characteristic opacity, and stochastic nature of recommendations) is often a leap too far.

Work on your change management, but more crucially, strategically choose business/process decision points (aka use-cases) to acceptably AI-enable.

Technical choice of ML modeling needs business judgement too. The more flexible non-linear models that increase prediction accuracy invariably suffer from lower interpretability – and may be a poor choice in many business contexts. Depending upon business data volumes and accuracy, model bias-variance trade-offs need to be made. Assessing model accuracy and its thresholds (false-positive versus false-negative trade-offs) is similarly nuanced. All this implies that the organisation’s domain knowledge needs to merge well with data science design. A pragmatic approach would be to not try to be cutting-edge.
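The false-positive versus false-negative trade-off can be made concrete with a small worked example. The scores and labels below are made up for illustration, and `confusion_counts` is a hypothetical helper; the point is only that moving the decision threshold exchanges one kind of error for the other, so the right setting depends on which error costs the business more.

```python
def confusion_counts(scores, labels, threshold):
    """Count false positives and false negatives at a given threshold.

    A case is predicted positive when its score is >= threshold.
    """
    false_pos = sum(
        1 for s, y in zip(scores, labels) if s >= threshold and y == 0
    )
    false_neg = sum(
        1 for s, y in zip(scores, labels) if s < threshold and y == 1
    )
    return false_pos, false_neg


# Made-up model scores with their true labels (1 = actual positive).
scores = [0.1, 0.3, 0.4, 0.6, 0.7, 0.9]
labels = [0, 0, 1, 0, 1, 1]

for threshold in (0.2, 0.5, 0.8):
    fp, fn = confusion_counts(scores, labels, threshold)
    print(f"threshold={threshold}: false positives={fp}, false negatives={fn}")
```

With this data, a low threshold produces only false positives and a high threshold only false negatives; choosing between them is a business decision as much as a data science one.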

Look to use proven foundational model-platforms – such as those for NLP, visual analytics – for first use cases. Also note that not every problem needs AI; a lot can be sorted through traditional programming (“if-then automation”) and should be. The dirty secret of the industry is that the power of a lot of products marketed as “AI-powered” is mostly traditional logic, under the hood!

In getting results from AI, most often “better data trumps better models”. Practically, this means that organisations need to spend more on data engineering effort than on data science effort. The CDO/CIO organisation needs to build the right balance of data competencies and tools.

Get the data readiness programs started – yesterday! While the focus of data scientists is often on training an AI model, deployment of the trained model online is a whole other level of technical challenge (particularly when it comes to IT-OT and real-time integrations).

It takes time to adopt AI in traditional organisations. Building up training data and model accuracy is a slow process. Organisational changes take time – and then you have to add considerations such as data standardisation; hygiene and integration programs; and the new attention required to build capabilities in AIOps, AI adoption and governance.

Typically plan for 3 years – monitor progress and steer every 6 months. Be ready to kill “zombie” projects along the way. Train the executive team – not to code, but to understand the technology’s capabilities and limitations. This will ensure better informed buyers/consumers and help drive adoption within the organisation.

I am by no means suggesting that traditional companies avoid or delay adopting AI. That would be akin to asking a factory to keep using only steam as power, even as electrification came in during the early 20th century! But organisations need to have a pragmatic strategy around what will undoubtedly be a big, but necessary, transition.

These opinions are personal (and may change with time), but definitely informed through a decade of involvement in such journeys. It is not too early for any organisation to start – results are beginning to show for those who started earlier, and we know what they got right (and wrong).

I would love to hear your views, or even engage with you on your journey!

The views and opinions mentioned in the article are personal.

Anirban Mukherjee has more than 25 years of experience in operations excellence and technology consulting across the globe, having led transformations in Energy, Engineering, and Automotive majors. Over the last decade, he has focused on Smart Manufacturing/Industry 4.0 solutions that integrate cutting-edge digital into existing operations.

Last week I wrote about the need to remove hype from reality when it comes to AI. But what will ensure that your AI projects succeed?

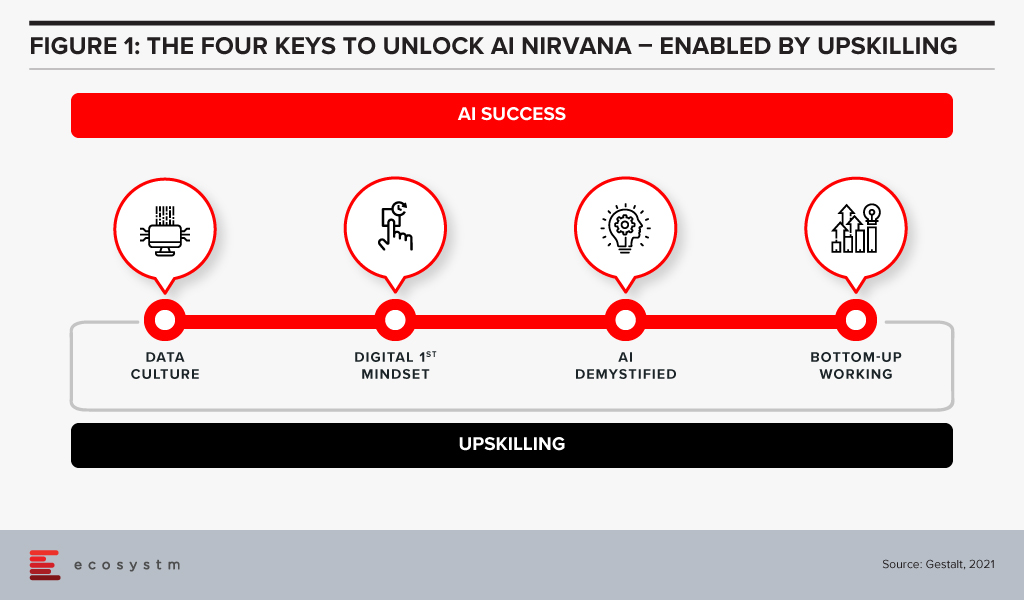

It is quite obvious that success is determined by human aspects rather than technological factors. We have identified four key organisational actions that enable successful AI implementation at scale (Figure 1).

#1 Establish a Data Culture

The traditional focus for companies has been on ensuring access to good, clean data sets and the proper use of that data. Ecosystm research shows that only 28% of organisations focused on customer service also focus on creating a data-driven organisational culture. But our experience has shown that culture is more critical than having the data. Does the organisation have a culture of using data to drive decisions? Does every level of the organisation understand and use data insights to do their day-to-day jobs? Is decision-making data-driven and decentralised, needing to be escalated only when there is ambiguity or need for strategic clarity? Do business teams push for new data sources when they are not able to get the insights they need?

Without this kind of culture, it may be possible to implement individual pieces of automation in a specific area or process, applying brute force to see it through. In order to transform the business and truly extract the power of AI, we advise organisations to build a culture of data-driven decision-making first. That organisational mindset will make you capable of implementing AI at scale. Focusing on changing the organisational culture will deliver greater returns than trying to implement piecemeal AI projects – even in the short to mid-term.

#2 Ingrain a Digital-First Mindset

Assuming a firm has passed the data culture hurdle, it needs to consider whether it has adopted a digital-first mindset. AI is one of many technologies that impact businesses, along with AR/VR, IoT, 5G, cloud and Blockchain to name a few. Today’s environment requires firms to be capable of utilising a variety of these technologies – often together – and possessing a workforce capable of using these digital tools.

A workforce with the digital-first mindset looks for a digital solution to problems wherever appropriate. They have a good understanding of digital technologies relevant to their space and understand key digital methodologies – such as Customer 360 to deliver a truly superior customer experience or Agile methodologies to successfully manage AI at scale.

AI needs business managers at the operational levels to work with IT or AI tech teams to pinpoint processes that are right for AI. They need to make an estimation based on historical data of what specific problems require an AI solution. This is enabled by the digital-first mindset.

#3 Demystify AI

The next step is to get business leaders, functional leaders, and business operational teams – not just those who work with AI – to acquire a basic understanding of AI.

They do not need to learn the intricacies of programming or how to create neural networks or anything nearly as technical in nature. However, all levels from the leadership down should have a solid understanding of what AI can do, the basics of how it works, how the process of training data results in improved outcomes and so on. They need to understand the continuous learning nature of AI solutions, getting better over time. While AI tools may recommend an answer, human insight is often needed to make a correct decision off this recommendation.

#4 Drive Implementation Bottom-Up

AI projects need alignment, objectives, strategy – and leadership and executive buy-in. But a very important aspect of an AI-driven organisation that is able to build scalable AI is letting projects run bottom-up.

As an example, a reputed Life Sciences company embarked on a multi-year AI project to improve productivity. They wanted to use NLP, Discovery, Cognitive Assist and ML to augment clinical proficiency of doctors and expected significant benefits in drug discovery and clinical trials by leveraging the immense dataset that was built over the last 20 years.

The company ran this like any other transformation project, with a central program management team taking the lead with the help of an AI Centre of Competency. These two teams developed a compelling business case, and identified initial pilots aligned with the long-term objectives of the program. However, after 18 months, they had very few tangible outcomes. Everyone who participated in the program, including doctors, research scientists, technicians, and administrators, had their own interpretation of what AI was not able to do.

Discussion revealed that the doctors and researchers felt that they were training AI to replace themselves. Seeing a tool trying to mimic the same access and understanding of numerous documents baffled them at best. They were not ready to work with AI programs step-by-step to help AI tools learn and discover new insights.

At this point, we suggested approaching the project bottom-up – wherein the participating teams would decide specific projects to take up. This developed a culture where teams collaborated as well as competed with each other, to find new ways to use AI. Employees were shown a roadmap of how their jobs would be enhanced by offloading routine decisions to AI. They were shown that AI tools augment employees’ cognitive capabilities and make them more effective.

The team working on clinical trials found these tools extremely useful and were able to collaborate with other organisations specialising in similar trials. They created the metadata and used ML algorithms to discover new insights. Working bottom-up led to a very successful AI deployment.

We have seen time and again that while leadership may set the strategy and objectives, it is best to let the teams work bottom-up to come up with the projects to implement.

#5 Invest in Upskilling

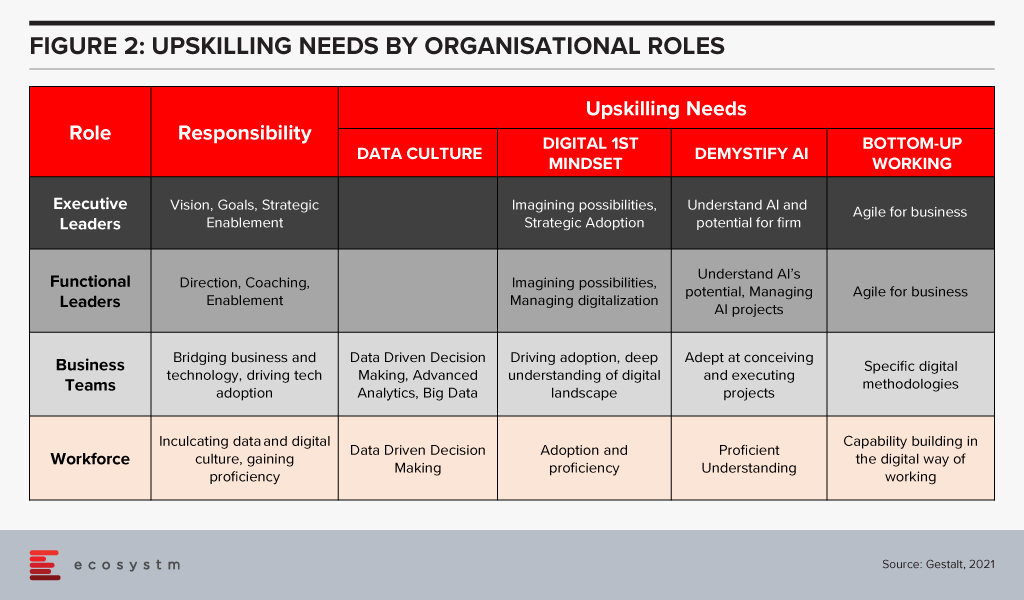

The four “keys” are important to build an AI-powered, future-proof enterprise. They are all human-related, and they come together as a winning formula when organisations invest in upskilling. Upskilling is the common glue and each factor requires specific kinds of upskilling (Figure 2).

Upskilling needs vary by organisational level and the key being addressed. The bottom line is that upskilling is a universal requirement for driving AI at scale, successfully. And many organisations are realising it fast – Bosch and DBS Bank are some of the notable examples.

How much is your organisation invested in upskilling for AI implementation at scale? Share your stories in the comment box below.

Written with contributions from Ravi Pattamatta and Ratnesh Prasad

SAS announced that it has acquired Boemska, a provider of low-code development tools and analytics workload management software. The small, privately held company is UK-based with an R&D centre in Serbia. The acquisition will be integrated into SAS Viya, its cloud-native platform, which includes containerised analytics and machine learning offerings. Terms of the deal have not been disclosed.

A SAS silver partner, Boemska has wins in Health, Finance, and Travel. Most of its reference clients are based in Europe in addition to a small number in the US and South Africa. Boemska has two primary software offerings – Enterprise Session Monitor (ESM) and AppFactory. Additionally, it delivers cloud migration, performance diagnostics, and application development services.

Boemska Capabilities

Boemska ESM provides visibility into performance and cost management of analytics workloads. The product enables self-service root cause analysis for developers, monitoring and batch schedule optimisation for administrators, and departmental cost allocation of cloud resources. ESM manages SAS, R, and Python workloads and is compatible with workload management platforms from the likes of IBM and BMC. Boemska shipped an updated version of ESM in 2020 to improve the UI and ensure support for SAS Viya. At the time, it announced that its development team had doubled in the preceding 12 months, suggesting a trajectory of growth.

AppFactory is a low-code development platform for data scientists and data engineers using SAS, which generates JavaScript for front-end developers along with data transport, authentication, and exception handling. SAS emphasises the portability of apps that can be created and run on mobile and IoT devices. Examples provided include machine learning and event alerts in healthcare wearables, video-based defect identification in Manufacturing, and drone-based asset monitoring in Utilities. Boemska states that its low-code offering seeks to bridge the “last mile of analytics” by putting insights into the hands of decision-makers.

SAS Focuses on Cloud-Native Analytics and AI

SAS launched Viya 4.0 in mid-2020, a major step in its vision to become a provider of cloud-native analytics and machine learning solutions. The platform includes offerings, such as Visual Analytics, Visual Statistics, Visual Machine Learning, and Visual Data Science packaged in containers and orchestrated by Kubernetes. Microsoft Azure has become its preferred cloud partner, assisting in developing SAS Cloud, hosted from data centres in the US, Brazil, Australia, and newly launched facilities in Germany and the UK. Viya managed services are also available from Azure regions. AWS and Google Cloud are expected to make the leap to Viya 4.0 from version 3.5 soon. As part of its cloud-native strategy, SAS now offers three tiers for software updates – bi-annual, monthly, or immediately after release.

Ecosystm Comment

The major overhaul of SAS Viya is part of the vendor’s USD 1B investment into AI over three years from 2019-2021. The platform includes a heavy emphasis on NLP, machine learning, and computer vision. The integration of Boemska’s low-code development offering into Viya will allow SAS clients to extract greater value from AI by quickly embedding it in mobile and enterprise applications. The converging trends of citizen developers and data literacy suggest SAS has selected the right path for the future.


Organisations are finding that the ways to do work and conduct business are evolving rapidly. It is evident that we cannot use the perspectives from the past as a guide to the future. As a consequence, leaders and employees alike are rediscovering and adapting their work and their expectations of it. In general, while job security concerns still command significant mindshare, simple productivity measures are evolving into more nuanced wellness measures. This puts demands on the CHRO and the leadership team to think about company, customer and people strategy as one holistic way of working and doing business.

Organisations will have to re-think their people and technology to evolve their Future of Work policies and strategise their Future of Talent. There are multiple dimensions that will require attention.

Hybrid is Becoming Mainstream

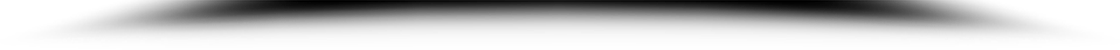

It is clear that hybrid workplaces are here to stay. Ecosystm research finds that in 2021 BFSI organisations will use more collaboration tools and platforms, and virtual meetings (Figure 1). Nearly 40% expect more employees to work from home, but only about a quarter of organisations are looking to reduce their physical workspaces. Organisations will give more choice to employees in the location of their work – and employees will choose to work from where they are more productive. The Hybrid model will be more mainstream than it has been in the last few months.

Companies are coming to terms with the fact that there is no single answer to operating in the new world. Experimentation and learnings are continuously captured to create the workforce and workplace model that works best. Agility, both in understanding the market and in adapting quickly, is becoming important. Being able to use different models and ways of working at the same time is the new norm.

Technology and Talent are Core

Talent and tech are the two core pillars that companies need to look at to be successful against their competition. It is becoming imperative to create synergy between the two to deliver a superior value proposition to customers. Companies that are able to bring the customer and employee experience journeys together will be able to create better value. HR tech stacks need to evolve to be more deliberate in the way they link the employee experience, customer experience, and the culture of the organisation. That’s how the Employee Value Proposition (EVP) comes to life on a day-to-day basis for employees. With evolving work models, the tech stack is a key EVP pillar.

Governments will also need to partner with industry to make such talent available. Singapore is rolling out a new “Tech.Pass” to support the entry of up to 500 proven founders, leaders and experts from top tech companies into Singapore. It is an extension of the Tech@SG program launched in 2019, to provide fast-growing companies greater assurance and access to the talent they need. The EDB will administer the pass, supported by the Ministry of Manpower.

Attracting the Right Talent

Talent has always been difficult to find. Even with globalisation, significant investment of time and resources is needed to find and relocate talent to the right geography. In many instances this was not possible given the preferences of the candidates and/or the hiring managers. COVID-19 has changed this drastically. Remote working and distributed teams have become acceptable. With limitations on immigration and travel for work, there is a lot more openness to finding and hiring talent from outside the traditional talent pool.

However, it is not as simple as it seems. The cost per applicant (CPA) – the cost to convert a job seeker to a job applicant – had been averaging US$11-12 throughout 2019 according to recruiting benchmark data from programmatic recruitment advertising provider, Appcast. But the impact of COVID-19 saw the CPA reach US$19 in June – a 60% increase. I expect that finding the right talent is going to be a “needle in a haystack” issue. But this is only one side of the coin – the other aspect is that the talent profile needed to be successful in roles that are all remote or hybrid is also significantly different from what it was before. Companies need to pay special attention to what kind of people they would like to hire in these new roles. Without this due consideration, it is very likely that there will be difficulty in on-boarding these new hires and making them successful within the organisation.

Automation, Augmentation and Skills

The pace at which companies are choosing to automate or apply AI is increasing. This is changing the work patterns and job requirements for many roles within the industry. According to the BCG China AI study on the financial sector, 23% of the roles will be replaced by AI by 2027. The roles that will not be replaced will need a higher degree of soft skills, critical thinking and creativity. However, automation is not the endgame. Firms that go ahead with automation without considering the implications for the business process, and the skills and roles it impacts, will end up disrupting the business and customer experience. Firms will have to design their customer journeys and business processes together with the roles and capabilities needed. Job redesign and reskilling will be key to ensuring a great customer experience.

Analytics is Inadequate Without the Right Culture

Data-driven decision-making as well as modelling is known to add value to business. We have great examples of analytics and data modelling being used successfully in Attrition, Recruitment, Talent Analytics, Engagement and Employee Experience. The next evolution is already underway with advanced analytics, sentiment analysis, organisation network analysis and natural language processing (NLP) being used to draw better insights and make people strategies predictive. Being able to use effective data models to predict and draw insights will be a key success factor for leadership teams. Data and bots do not drive engagement and alignment to purpose – leaders do. Working to promote transparency of data insights and decisions for faster response, to champion diversity, and to give everyone a voice through inclusion will lead to better co-creation, faster innovation and overall market agility.

Creating a Synergy

We are seeing a number of resets to what we used to know, believe and think about the ways of working. It is a good time to rethink what we believe about the customer, business, talent and tech. Just as customer experience is not just about good sales skills or customer service, the employee experience and the role of Talent are also evolving rapidly. As companies experiment with work models, technology and work environment, there will be a need to constantly recalibrate business models, job roles, job technology and skills. With this will come the challenge of melding the pieces together within the context of the entire business without falling into the trap of siloed thinking. Only by bringing business processes, talent, capability evolution, culture and digital platforms together as one coherent ecosystem can firms create a winning formula for a competitive edge.

Singapore FinTech Festival 2020: Talent Summit

For more insights, attend the Singapore FinTech Festival 2020: Infrastructure Summit which will cover topics such as founders’ success and failure stories, pandemic impact on founders and talent development, and upskilling and reskilling for the future of work.

In 2020, much of the focus for organisations was on business continuity, and on empowering their employees to work remotely. Their primary focus in managing customer experience was on re-inventing their product and service delivery to their customers as regular modes were disrupted. As they emerge from the crisis, organisations will realise that it is not only their customer experience delivery models that have changed – customer expectations have also evolved in the last few months. Customers are more open to digital interactions and in many cases the concept of brand loyalty has been diluted. This will change everything for organisations’ customer strategies. And digital technology will play a significant role as they continue to pivot to succeed in 2021 – across regions, industries and organisations.

Ecosystm Advisors Audrey William, Niloy Mukherjee and Tim Sheedy present the top 5 Ecosystm predictions for Customer Experience in 2021. This is a summary of the predictions – the full report (including the implications) is available to download for free on the Ecosystm platform.

The Top 5 Customer Experience Trends for 2021

- Customer Experience Will Go Truly Digital

COVID-19 made the few businesses that did not have an online presence acutely aware that they need one – yesterday! We have seen at least four years of digital growth squeezed into six months of 2020. And this is only the beginning. While the focus in 2020 was primarily on eCommerce and digital payments, there will now be a huge demand for new platforms to interact digitally with the customer, not just to sell something online.

Digital customer interactions with brands and products – through social media, online influencers, interactive AI-driven apps, online marketplaces and the like – will accelerate dramatically in 2021. The organisations that will be successful will be the ones that are able to interact with their customers and connect with them at multiple touchpoints across the customer journey. Companies unable to do that will struggle.

- Digital Engagement Will Expand Beyond the Traditional Customer-focused Industries

One of the biggest changes in 2020 has been the increase in digital engagement by industries that have not traditionally had a strong eye on CX. This trend is likely to accelerate and be further enhanced in 2021.

Healthcare has traditionally been focused on improving clinical outcomes – and patient experience has been a byproduct of that focus. Many remote care initiatives have the core objective of keeping patients out of the already over-crowded healthcare provider organisations. These initiatives will now have a strong CX element to them. The need to disseminate information to citizens has also heightened expectations on how people want their healthcare organisations and Public Health to interact with them. The public sector will dramatically increase digital interactions with citizens, having been forced to look at digital solutions during the pandemic.

Other industries that have not had a traditional focus on CX will not be far behind. The Primary & Resources industries are showing an interest in Digital CX almost for the first time. Most of these businesses are looking to transform how they manage their supply chains from mine/farm to the end customer. Energy and Utilities and Manufacturing industries will also begin to benefit from a customer focus, primarily looking at technology, including 3D printing, to customise their products and services for better CX and a larger share of the market.

- Brands that Establish a Trusted Relationship Can Start Having Fun Again

Building trust was at the core of most businesses’ CX strategies in 2020 as they attempted to provide certainty in a world generally devoid of it. But in the struggle to build a trusted experience and brand, most businesses lost the “fun”. In fact, for many businesses, fun was off the agenda entirely. Soft drink brands, travel providers, clothing retailers and many other brands typically known for their fun or cheeky experiences moved the needle to “trust” and dialled it up to 11. But with a number of vaccines on the horizon, many CX professionals will look to return to pre-pandemic experiences that look to delight and sometimes even surprise customers.

However, many companies will get this wrong. Customers will not be looking for just fun or just great experiences. Trust still needs to be at the core of the experience. Customers will not return to pre-pandemic thinking – not immediately anyway. You can create a fun experience only if you have earned their trust first. And trust is earned by not only providing easy and effective experiences, but by being authentic.

- Customer Data Platforms Will See Increased Adoption

Enterprises continue to struggle to have a single view of the customer. There is immense interest in making better sense of data across every touchpoint – from mobile apps, websites, social media, in-store interactions and calls to the contact centre – to be able to create deeper customer profiles. CRM systems have been the traditional repositories of customer data, helping build a sales pipeline, and providing Marketing teams with the information they need for lead generation and marketing campaigns. However, CRM systems have an incomplete view of the customer journey. They often collect and store the same data from limited touchpoints, and getting richer insights and targeted action recommendations from the same datasets is not possible in today’s world. Organisations struggled to pivot their customer strategies during COVID-19, and data residing in silos was an obstacle to driving better customer experience.

We are living in an age where customer journeys and preferences are becoming complex to decipher. An API-based CDP can ingest data from any channel of interaction across multiple journeys and create unique and detailed customer profiles. The way forward is a complete overhaul of how data is segmented, building a more accurate and targeted profile of the customer from multiple sources in order to drive more proactive CX engagement.
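To make the idea of ingesting interactions from several channels into one profile concrete, here is a minimal Python sketch. It is illustrative only and does not represent any vendor's CDP: the event fields (`customer_id`, `channel`, `timestamp`, `action`), the `CustomerProfile` class and the `build_profiles` function are all assumptions made for this example, and it assumes every channel already shares a common customer identifier, which is often the hardest part in practice.

```python
from dataclasses import dataclass, field


@dataclass
class CustomerProfile:
    """Hypothetical unified profile; the field names are assumptions."""
    customer_id: str
    channels: set = field(default_factory=set)
    events: list = field(default_factory=list)


def build_profiles(events):
    """Group interaction events from any channel into one profile per customer."""
    profiles = {}
    for event in events:
        cid = event["customer_id"]
        if cid not in profiles:
            profiles[cid] = CustomerProfile(cid)
        profiles[cid].channels.add(event["channel"])
        profiles[cid].events.append(event)
    # Order each customer's journey by time (ISO 8601 strings sort correctly).
    for profile in profiles.values():
        profile.events.sort(key=lambda e: e["timestamp"])
    return profiles


# Sample events as they might arrive from separate, siloed systems.
sample_events = [
    {"customer_id": "c1", "channel": "contact_centre",
     "timestamp": "2021-01-05T10:00:00", "action": "complaint"},
    {"customer_id": "c1", "channel": "web",
     "timestamp": "2021-01-03T09:30:00", "action": "viewed_product"},
    {"customer_id": "c2", "channel": "mobile_app",
     "timestamp": "2021-01-04T14:15:00", "action": "purchase"},
]

profiles = build_profiles(sample_events)
```

In this sketch, `profiles["c1"]` holds both the web visit and the later contact centre call in time order, which is the kind of cross-channel view that a CRM fed from limited touchpoints would miss.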

- Voice of the Customer Programs Will be Transformed

Designing surveys and Voice of Customer programs can be time-consuming, and many organisations that routinely run these surveys use a fixed pattern for the data they collect and analyse. However, some organisations understand that just analysing results from a survey or CSAT score does not say much about what customers will do next. While it may give an idea of whether particular interactions were satisfactory, it gives no indication of whether they are likely to move to another brand; if they needed more assistance; if there was an opportunity to upsell or cross-sell; or even what new products and services need to be introduced. Some customers will just tick the box as a way of closing off a feedback form or survey. Leading organisations realise that this may not be a good enough indication of a brand’s health.

Organisations will look beyond CSAT to other parameters and attributes. It is time to pay greater attention to the Voice of the Customer – and old methods alone will not suffice. Organisations want a 360-degree view of their customers’ opinions.